The Professor Who Thought Bold Text Was a Robot

Someone accused me of using AI to write my Reddit comments a while back.

Not because of what I said. Because of how I presented it.

I'd used bold text. And italics. To make the argument easier to follow.

This, apparently, was suspicious.

The person making the accusation teaches at a university.

I'll let that sit for a moment.

I formatted my replies carefully because I was trying to build a clear argument, and I had the basic courtesy to make it readable. This is, or at least was when I was at school, considered a virtue. Something you aimed for. Something teachers encouraged.

Now it's evidence of cheating.

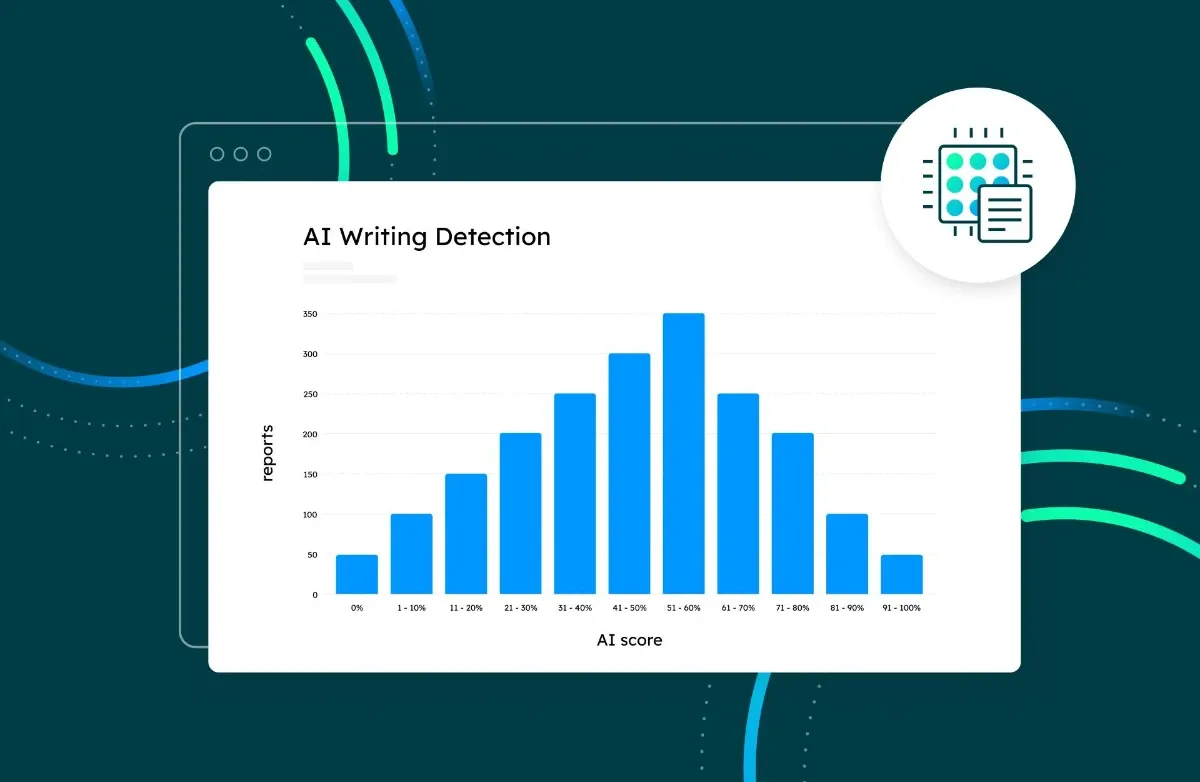

The thread was about AI detectors, specifically about whether they work. My position, supported by running Charles Dickens through five of them and watching him score 95% AI, was that they don't. The professor's position was that they do, and that my well-formatted counter-argument proved it.

There's a word for this. Circular isn't quite right. Deranged is closer.

While that exchange was still fresh, I read about the Australian Catholic University, which had been flagging students for academic misconduct based on the results of an AI detector. The tool in question was Turnitin. In 2024 alone, nearly 6,000 misconduct cases were reported, around 90% of them AI-related.

Turnitin's own website warns that its detector should not be used as the sole basis for adverse actions against a student. The university used it as the sole basis anyway, and had known about its unreliability for over a year before finally abandoning it. Not before real students, who had done real work, had their futures held hostage by an algorithm that cannot reliably distinguish a human being from a piece of software.

One nursing student faced a six-month investigation and missed a graduate job opportunity. She had written every word herself.

These are not abstract stakes. These are people's degrees. Their careers. Their years of work, dismissed by a confidence score generated by software that would fail Charles Dickens.

And still, in the thread, some people defended the detectors.

I find myself wondering how teachers managed before any of this existed. How they assessed whether the work in front of them was genuine.

They read it.

They knew their students. They'd spent months in seminars with them, reading their earlier work, listening to them think out loud. When something felt wrong, it felt wrong because they had a basis for comparison. A relationship. A professional judgment built over time.

The detector replaces all of that with a number.

A number that punishes clarity. That flags structure as suspicious. That would rather see you write "a wax cup for hot tea" than "a chocolate teapot", because the clumsier phrase is less predictable and therefore harder to flag.

A number generated by software that is also, conveniently, selling you the solution to the problem it invented.

The professor who thought my formatting was a red flag is teaching students to write. Presumably teaching them to write clearly, with structure, with care for the reader.

And then running their work through a tool that treats those qualities as evidence of fraud.

I used bold text because I was trying to be helpful.

Apparently that's the tell.

We deserve everything that's coming to us.